Silence on hold isn’t neutral. It signals delay, uncertainty, and in many cases, operational breakdown long before a patient ever steps into a facility.

Front desks weren’t designed for this volume. What used to be a manageable flow of appointment calls has turned into a constant stream of rescheduling, insurance clarification, urgent inquiries, and follow-ups stacked on top of each other without pause.

Something gives eventually. Staff fatigue isn’t just emotional; it’s systemic. Once it sets in, response quality drops, call handling slows, and small inefficiencies compound into visible patient dissatisfaction.

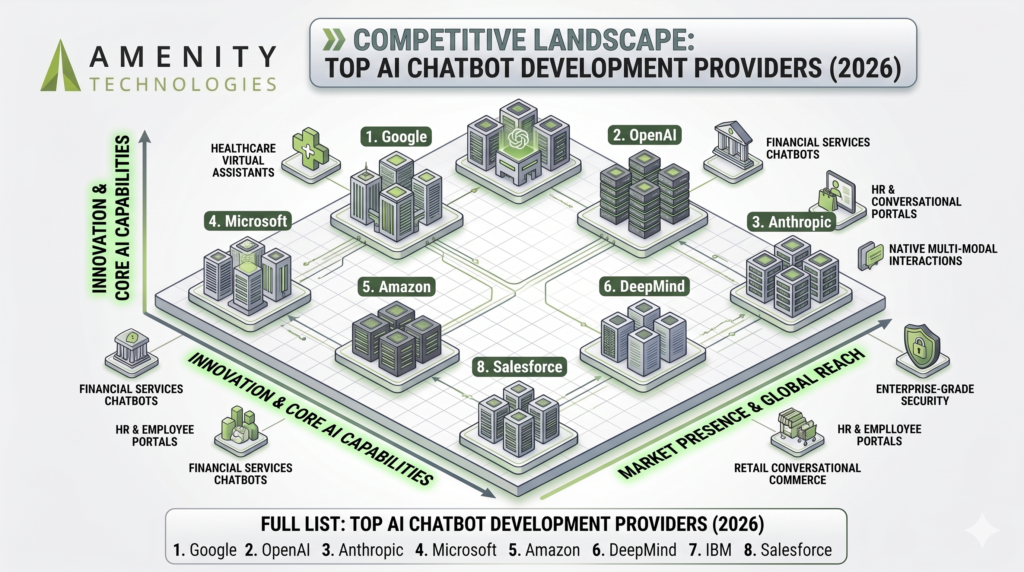

This is where the shift begins. The best voice AI for healthcare front-desk automation in 2026 doesn’t enter as a support tool. It replaces the idea that human bandwidth should be the primary control layer for inbound communication, and well-built AI voice bots streamline front-desk operations as a result.

Defining the Intelligence

Real conversations are rarely well structured, and they never follow a script. Patients circle around symptoms, mix concerns, hesitate mid-sentence, and expect to be understood anyway.

That’s where older systems fail immediately. Decision-tree IVRs require compliance from the caller, while modern AI voice bot systems adapt to the caller instead.

Two capabilities redefine this layer:

Acoustic interpretation

Voice is more than words. Pacing, tone shifts, and interruptions carry signals that modern voice systems can process alongside the linguistic input handled by Natural Language Understanding (NLU) models, which helps the system distinguish urgency from casual inquiry without relying on explicit keywords.

Context stacking

Meaning builds over time. A patient might start with “I need an appointment,” then reveal symptoms later. An intelligent system holds context across the conversation, rather than resetting interpretation with every sentence.

The interaction stops feeling like navigation. It starts feeling like being understood.
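As a rough illustration of context stacking, the sketch below keeps one context object per call and updates it with every utterance instead of reinterpreting each sentence from scratch. The keyword tables, the `ConversationContext` class, and the urgency rule are all hypothetical stand-ins; a production system would rely on a trained NLU model rather than substring matching.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical keyword tables, used here only to keep the sketch self-contained.
INTENT_KEYWORDS = {"appointment": "book_appointment", "reschedule": "reschedule"}
SYMPTOM_KEYWORDS = {"chest pain", "fever", "headache"}
URGENT_SYMPTOMS = {"chest pain"}


@dataclass
class ConversationContext:
    """State that persists across every turn of a single call."""
    intent: Optional[str] = None
    symptoms: Set[str] = field(default_factory=set)

    def update(self, utterance: str) -> None:
        """Merge what this utterance adds without discarding earlier turns."""
        text = utterance.lower()
        for keyword, intent in INTENT_KEYWORDS.items():
            # Keep the first intent the caller expressed.
            if self.intent is None and keyword in text:
                self.intent = intent
        for symptom in SYMPTOM_KEYWORDS:
            if symptom in text:
                self.symptoms.add(symptom)

    @property
    def urgent(self) -> bool:
        """Urgency is derived from everything heard so far."""
        return bool(self.symptoms & URGENT_SYMPTOMS)


ctx = ConversationContext()
ctx.update("I need an appointment")
ctx.update("I've had chest pain since this morning")
print(ctx.intent, ctx.urgent)
```

The second utterance never mentions an appointment, yet the booking intent survives and the call is now flagged as urgent, which is the behavior the section describes.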

The Engineering Behind the Voice

This layer is unforgiving. Voice bot development for healthcare doesn’t tolerate approximation, because every millisecond and every misinterpretation have downstream impact.

Keeping latency low under HIPAA constraints isn’t just about speed. It is about maintaining real-time conversational flow while encrypting, processing, and responding to sensitive voice data within regulated boundaries.

Then comes linguistic noise. Patients rarely describe symptoms cleanly, and regional accents, multilingual switching, and incomplete phrasing introduce ambiguity that generic AI systems simply aren’t trained to resolve.

Underneath that sits orchestration.

- Call input triggers intent parsing

- Intent triggers workflow mapping

- Workflow triggers system actions inside EHR/EMR environments

No visible seams. If the system pauses, repeats, or misroutes, trust erodes immediately.

This is why shallow implementations fail quietly, and why deeply engineered systems behave like infrastructure rather than software.
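The three-stage orchestration described above (call input to intent, intent to workflow, workflow to system actions) can be sketched in a few lines. Everything named here is an assumption made for illustration: `parse_intent` uses naive keyword matching, `WORKFLOWS` is a hypothetical mapping, and `FakeEHR` is an in-memory stand-in rather than any real EHR/EMR API.

```python
from typing import Dict, List

# Hypothetical mapping from intent to the ordered actions a workflow needs.
WORKFLOWS: Dict[str, List[str]] = {
    "book_appointment": ["verify_identity", "find_slots", "write_booking"],
    "reschedule": ["verify_identity", "cancel_booking", "find_slots", "write_booking"],
    "unknown": ["escalate_to_staff"],
}


def parse_intent(transcript: str) -> str:
    """Stage 1: turn call input into an intent (keyword matching as a placeholder)."""
    text = transcript.lower()
    if "reschedule" in text:
        return "reschedule"
    if "appointment" in text or "book" in text:
        return "book_appointment"
    return "unknown"


class FakeEHR:
    """In-memory stand-in for an EHR/EMR integration; it only records actions."""

    def __init__(self) -> None:
        self.log: List[str] = []

    def run(self, action: str) -> None:
        self.log.append(action)


def handle_call(transcript: str, ehr: FakeEHR) -> str:
    intent = parse_intent(transcript)   # call input triggers intent parsing
    steps = WORKFLOWS[intent]           # intent triggers workflow mapping
    for step in steps:                  # workflow triggers system actions
        ehr.run(step)
    return intent


ehr = FakeEHR()
print(handle_call("I need to reschedule my appointment", ehr), ehr.log)
```

Unrecognized requests fall through to `escalate_to_staff` rather than guessing, which is one simple way to avoid the misrouting the section warns about.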

Workflow Integration

Most inefficiencies are invisible. They live in the transitions between systems, people, and steps that were never designed to connect smoothly.

Voice bots remove those gaps. Not by adding features, but by compressing workflows.

Think of a typical call, then remove the friction between its steps:

- Identity confirmation happens mid-conversation, not as a separate step

- Insurance validation runs in parallel while the patient is speaking

- Appointment slots are filtered dynamically, not pre-listed

- Confirmation writes directly into the system without manual input

It feels simple on the surface. Underneath, multiple systems are synchronizing in real time.

No toggling screens. No “please hold.” There’s just continuity, which turns front-desk operations from reactive to controlled, where demand is absorbed instead of managed manually.
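To make the compressed call flow concrete, here is a minimal sketch in which identity confirmation and insurance validation run concurrently and slots are filtered on demand. The function names, the `INS-` member ID rule, and the slot dictionaries are hypothetical; the short sleeps stand in for network calls to real systems.

```python
import asyncio
from typing import Dict, List, Optional


async def confirm_identity(name: str, dob: str) -> bool:
    await asyncio.sleep(0.01)  # placeholder for a patient-record lookup
    return bool(name and dob)


async def validate_insurance(member_id: str) -> bool:
    await asyncio.sleep(0.01)  # placeholder for a payer eligibility check
    return member_id.startswith("INS-")  # hypothetical validity rule


def filter_slots(slots: List[Dict], provider: str) -> List[Dict]:
    """Filter open slots for the requested provider at call time."""
    return [s for s in slots if s["provider"] == provider and s["free"]]


async def handle_booking(caller: Dict, slots: List[Dict]) -> Optional[Dict]:
    # Both checks run concurrently instead of as separate sequential steps.
    identity_ok, insurance_ok = await asyncio.gather(
        confirm_identity(caller["name"], caller["dob"]),
        validate_insurance(caller["member_id"]),
    )
    if not (identity_ok and insurance_ok):
        return None  # a real system would hand off to staff here
    available = filter_slots(slots, caller["provider"])
    return available[0] if available else None


slots = [
    {"provider": "dr_a", "time": "09:00", "free": False},
    {"provider": "dr_a", "time": "10:00", "free": True},
    {"provider": "dr_b", "time": "09:30", "free": True},
]
caller = {"name": "Pat Doe", "dob": "1990-01-01",
          "member_id": "INS-123", "provider": "dr_a"}
print(asyncio.run(handle_booking(caller, slots)))
```

Because the two checks overlap, the caller waits roughly as long as the slower one takes rather than the sum of both, which is where the sense of continuity comes from.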

The Patient Perspective

Patients don’t analyze systems. They notice friction.

Waiting amplifies everything. A relatively small concern feels urgent after even a slight delay, and a routine appointment request feels like a barrier when access is slow.

Speed resets expectations. A near-instant response from an AI voice bot doesn’t just solve the request; it changes how the entire organization is perceived.

There’s also something less obvious. Consistency. Human interactions vary. Tone, patience, and clarity shift depending on workload, time of day, or staff experience level. Advanced voice AI removes that variability.

With AI in place, every response becomes stable. Every interaction follows the same standard of clarity and pacing.

Patients may not describe it technically. They just experience it as “easier.”

The Financial ROI

Most hospitals don’t notice the loss immediately. It doesn’t show up as a single number. It spreads across small gaps that are easy to ignore until they start stacking.

Take missed calls. One or two in isolation don’t seem critical, but when that becomes a daily pattern, it starts affecting how full the schedule actually is, not just how full it looks on paper.

No-shows tell a similar story. Not always negligence. Sometimes it’s poor timing, sometimes unclear communication, sometimes just a reminder that didn’t land when it should have.

Voice automation doesn’t “fix revenue” directly. That’s not really its role. What it does is remove inconsistency. Calls get picked up. Every time. Peak hours stop behaving like a bottleneck. Patients don’t wait long enough to reconsider or drop off. And confirmations feel different when they happen in conversation instead of a message that can be ignored.

Front-desk teams notice the shift first. Less repetition. Fewer interruptions. More space to handle the cases that actually require attention.

The financial side follows later. Not dramatically, but steadily, and in a way that holds.

Scalability & Training

Things change faster than systems do. That’s usually the problem.

A workflow that works in January starts showing cracks by March. New providers come in, patient volume shifts, certain types of calls increase, and suddenly the system that worked fine during deployment starts needing workarounds.

That’s where most automation falls short. It’s built to handle volume, not variation.

A voice bot in healthcare has to deal with both. Same request, different wording. Same intent, different urgency. Patients don’t repeat themselves in predictable ways.

So the system has to adjust. Not through big updates. Those rarely happen on time.

You’ll notice it in subtle ways:

- Fewer misrouted calls over time

- Faster understanding of commonly used phrases

- Less need for patients to repeat themselves

The interaction starts feeling smoother, almost without anyone pointing out why.

That’s usually the sign that it’s working.

Scalability, in this case, isn’t just about handling more calls. It’s about staying accurate while things around it keep shifting, which, in healthcare, they always do.

Choosing Strategy Over Hype

AI is easy to oversell. Demos look smooth, conversations sound polished, and promises tend to focus on surface-level automation. Reality shows up later.

A quick caution. If the system doesn’t align with your workflows on day one, it won’t adapt in production without significant rework.

What actually matters:

- Depth of EHR/EMR integration

- Accuracy of Natural Language Understanding (NLU) in real conversations

- Stability under peak call conditions

- Compliance built into architecture, not layered afterward

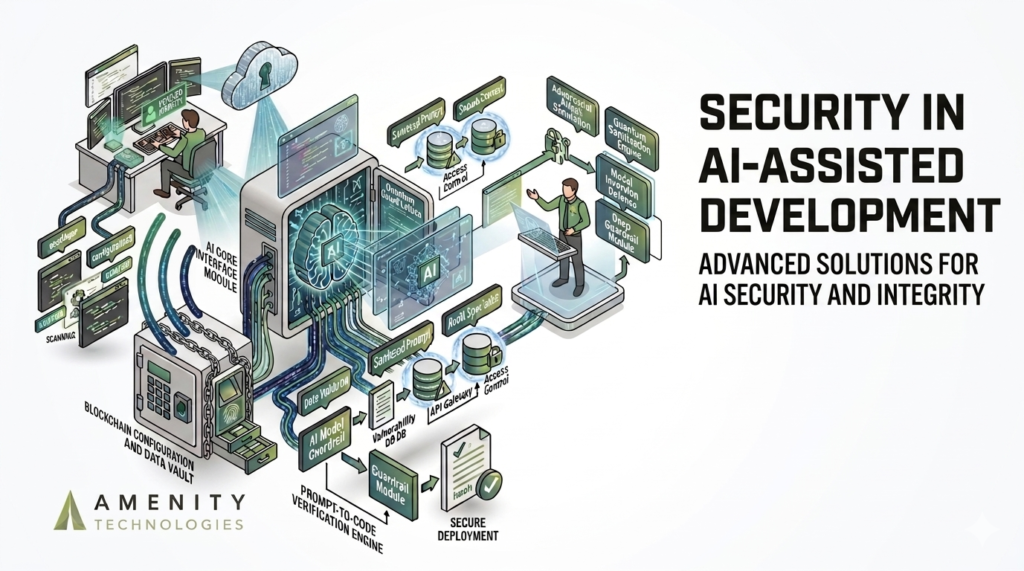

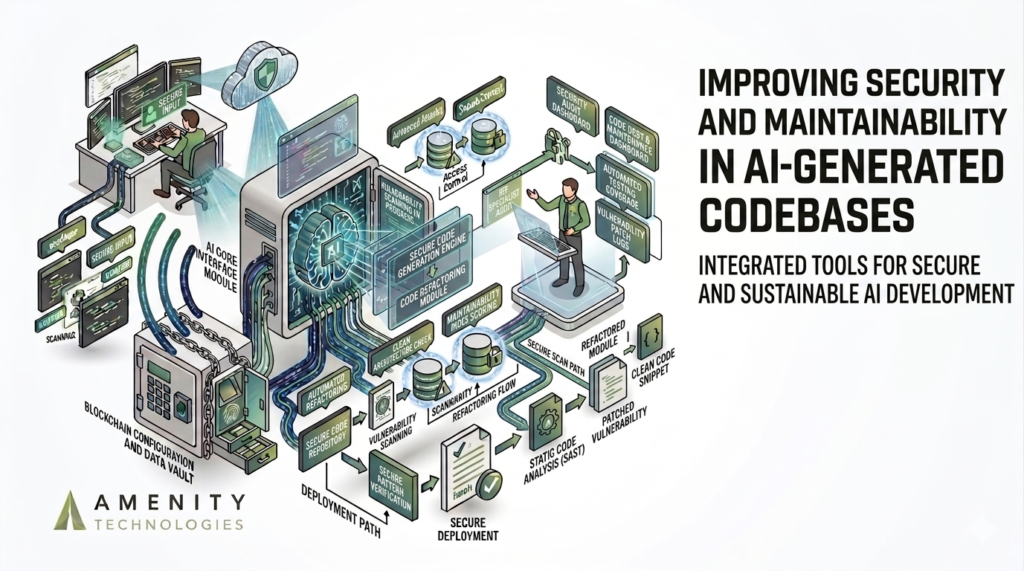

Amenity Technologies focuses on alignment first. Systems are designed around operational reality, not theoretical capability, which is why implementations hold up under real-world pressure and not only in controlled scenarios.

And that’s the real decision point. Not whether to adopt an AI voice bot, but whether your front desk continues operating as a bottleneck, or finally becomes a system that can keep up.

FAQs

Q.1. Can an AI voice bot handle complex healthcare requests, or just basic scheduling?

A: That depends on how it’s built. Basic systems handle only appointment booking. Properly engineered voice bots, especially those designed for healthcare, can manage insurance verification, rescheduling logic, follow-ups, and even urgency-based routing. The key factor is integration depth. Without EHR/EMR integration, capability stays limited.

Q.2. Will patients feel uncomfortable speaking to an AI instead of a human?

A: Most patients don’t, at least not for front-desk tasks. They usually care about speed and clarity more than who (or what) is answering. If the AI voice bot responds quickly, understands them without making them repeat themselves, and resolves the request without friction, the experience is often perceived as better than waiting on hold. In complex or sensitive cases where human interaction matters, the system can still escalate to a staff member seamlessly.

Q.3. How does the system ensure patient data remains secure and compliant?

A: Security isn’t treated as an add-on here. It’s part of the architecture. Healthcare-grade voice bot development includes low-latency handling that stays within HIPAA requirements, encrypted voice data processing, and strict access controls across every interaction layer. Data isn’t just stored securely; it’s processed within controlled environments designed specifically for healthcare use. If a solution treats compliance as a feature instead of a foundation, that’s usually a red flag.
