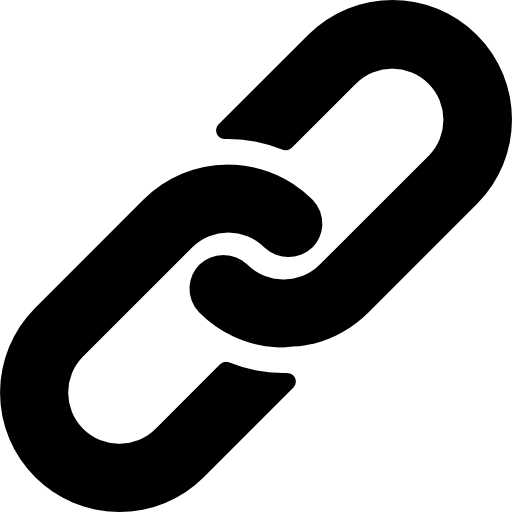

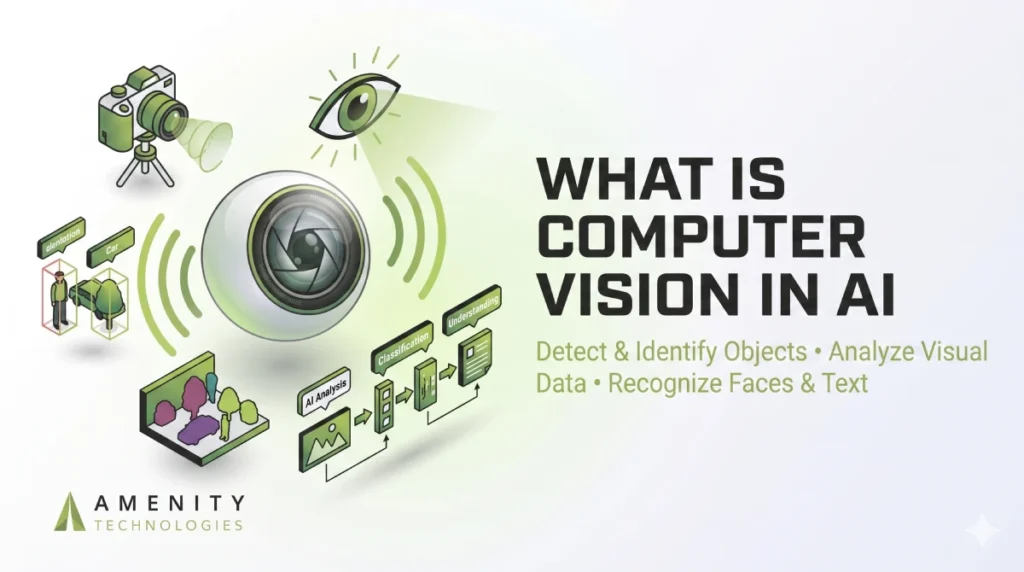

What Is Computer Vision in AI and How Does It Work?

People naturally understand what they see. A quick glance at a crowded warehouse or retail shelf is usually enough for the human brain to identify movement, objects, and irregular patterns. Machines work in a completely different way.

A computer vision system does not “see” the environment like a person does. It studies visual data through numbers, pixel values, shape patterns, and contrast changes. AI models then compare those patterns with previously trained examples before making a prediction.
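To make the "numbers" point concrete, here is a minimal Python sketch. It assumes a tiny hand-written grayscale image (a list of rows, values 0 to 255) and two hypothetical helpers, `horizontal_contrast` and `edge_columns`, that are not part of any library. Real systems work on much larger arrays and use learned filters rather than a fixed threshold, so treat this only as an illustration of how a contrast change looks to a machine.

```python
# A tiny grayscale "image": each number is a pixel brightness (0 = black, 255 = white).
# The values are invented for illustration: dark on the left, bright on the right.
image = [
    [10, 12, 11, 200, 205, 210],
    [9, 11, 13, 198, 202, 207],
    [12, 10, 12, 201, 204, 209],
    [11, 13, 10, 199, 203, 208],
]


def horizontal_contrast(img):
    """Absolute brightness difference between neighbouring pixels in each row."""
    return [
        [abs(row[x + 1] - row[x]) for x in range(len(row) - 1)]
        for row in img
    ]


def edge_columns(img, threshold=50):
    """Column indices where every row shows a contrast jump above the threshold."""
    contrast = horizontal_contrast(img)
    width = len(contrast[0])
    return [x for x in range(width) if all(row[x] > threshold for row in contrast)]


print(edge_columns(image))  # prints [2]
```

The only "edge" the code finds sits between columns 2 and 3, where brightness jumps from roughly 10 to roughly 200. The system never sees a shape, only that jump in the numbers.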

This is why computer vision projects often look simple during demonstrations but become difficult once they enter real operational settings. Camera glare, unstable lighting, motion blur, and crowded scenes create problems that directly affect prediction quality.

The technology itself is powerful. The real challenge is making it reliable outside controlled testing environments.

In this post, we explain what computer vision in AI is and how it works, so you can judge where it can improve operational efficiency, reduce labor costs, and raise accuracy in your own operations.

What Is Computer Vision in AI?

Computer vision in AI is the process of enabling machines to analyze images, video streams, or live camera feeds and respond based on what they detect.

Instead of relying on human observation, businesses use computer vision systems to automate tasks that involve visual inspection, object identification, movement tracking, or scene analysis.

The technology is now used in industries that process large amounts of visual information every day. Some common examples involve:

- Manufacturing quality inspection

- Retail shelf monitoring

- Warehouse automation

- Traffic analysis systems

- Medical image diagnostics

- Facial recognition platforms

The primary goal is simple: minimize manual effort while improving consistency and operational speed.

Unlike humans, AI systems do not “understand” visuals emotionally or contextually. They recognize statistical patterns inside image data and generate predictions based on training.

That distinction is important because many deployment problems begin when businesses expect human-level adaptability from systems that fundamentally work through probability.

Why Traditional Sensors Hit a Wall

Older automation systems worked well in predictable environments. A sensor detected presence. A scanner confirmed a barcode. A machine triggered the next step.

That approach still works for simple workflows. Modern operations are different. Warehouses handle mixed inventory sizes. Manufacturing lines move faster than before. Retail stores need live visibility into customer movement, shelf conditions, and product availability.

Legacy sensors are binary: they detect that something is there. Vision is contextual: it identifies what the object is and whether it belongs there. A proximity sensor may detect movement, but it cannot determine whether a worker entered a restricted zone or whether a damaged package is moving down a conveyor line. Computer vision adds that missing layer of awareness.

Instead of responding to one signal at a time, the system evaluates the entire scene. It identifies relationships between objects, movement patterns, spacing, and abnormalities happening in real time. That changes automation completely.

Core Types of Computer Vision Systems

Not every computer vision system works the same way. The model used in a retail store will usually look very different from the one running inside a factory or warehouse. The use case decides the architecture.

Object Detection

This is one of the most widely used computer vision methods. The system identifies an object and also shows where it appears inside the frame. Logistics companies use it to track packages, manufacturers use it for defect inspection, and retailers use it for shelf monitoring.
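As a rough sketch of what "identifies an object and shows where it appears" means in data terms, a detection can be represented as a label, a confidence score, and a bounding box. The `Detection` class and the sample coordinates below are assumptions made for illustration, not the output format of any particular model. Intersection over union (IoU) is a common way to compare a predicted box with a hand-labelled one.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str          # what the object is
    confidence: float   # model score between 0 and 1
    box: tuple          # (x_min, y_min, x_max, y_max) in pixels


def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    # Overlap is zero when the boxes do not intersect.
    inter = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Invented example: the model's box is shifted 50 px from the annotated one.
predicted = Detection("package", 0.91, (100, 100, 200, 200))
labelled_box = (150, 100, 250, 200)

print(predicted.label, round(iou(predicted.box, labelled_box), 3))  # package 0.333
```

An IoU of 1.0 means the boxes match exactly and 0.0 means they do not overlap. Where to set the "good enough" threshold depends on the use case.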

Semantic Segmentation

Instead of describing the image as a whole, semantic segmentation assigns a class label to every pixel. That level of detail becomes useful in areas where precision matters, such as medical imaging, road mapping, or infrastructure inspection.

Pose Estimation

Pose estimation focuses on body movement and posture tracking. It is commonly used in workplace safety systems, sports analysis, and activity monitoring where movement patterns matter more than object recognition.

OCR (Optical Character Recognition)

OCR helps systems read printed or handwritten text from labels, invoices, serial numbers, packaging, and industrial documents. It is widely used in logistics, manufacturing, and document automation workflows.

Instance Segmentation

In busy environments, objects often overlap with each other. Instance segmentation helps separate them individually instead of treating them as one grouped object. This becomes useful in crowded retail spaces, warehouse operations, and traffic monitoring systems.
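The difference between a semantic mask and an instance map can be sketched in a few lines of Python. The example below uses an invented 3x6 mask and a hypothetical `label_instances` helper built on plain connected-component labeling. That is a deliberately simplified stand-in: trained instance segmentation models can also separate objects that touch or overlap, which this approach cannot.

```python
from collections import deque

# Semantic mask: every pixel carries a class label (0 = background, 1 = "box").
# Two separate boxes share the same class, so the mask alone cannot tell them apart.
semantic_mask = [
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 1, 1],
    [0, 0, 0, 0, 1, 1],
]


def label_instances(mask, target_class=1):
    """Give each 4-connected region of target_class its own instance id (1, 2, ...)."""
    height, width = len(mask), len(mask[0])
    instances = [[0] * width for _ in range(height)]
    next_id = 0
    for y in range(height):
        for x in range(width):
            if mask[y][x] != target_class or instances[y][x] != 0:
                continue
            # Found an unlabelled pixel: start a new instance and flood-fill it.
            next_id += 1
            instances[y][x] = next_id
            queue = deque([(y, x)])
            while queue:
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and mask[ny][nx] == target_class
                            and instances[ny][nx] == 0):
                        instances[ny][nx] = next_id
                        queue.append((ny, nx))
    return instances, next_id


instance_map, count = label_instances(semantic_mask)
print(count)  # prints 2
for row in instance_map:
    print(row)
```

The semantic mask says "these pixels are boxes". The instance map says "these pixels are box 1 and those are box 2", which is what counting or tracking individual items requires.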

Choosing the right approach early usually saves time during deployment. A model designed for simple object detection may struggle if the actual requirement involves detailed segmentation or movement tracking.

Why Off-the-Shelf Models Fail on the Floor

A model that performs well during testing can still struggle badly once it reaches a live environment. That part catches many businesses off guard.

Public datasets are ‘sunny day’ scenarios: the lighting is even and objects are clearly visible. They don’t prepare a model for the 3 AM shift where the lux levels drop and the camera lens is covered in dust. Real facilities are rarely that stable.

One camera may face direct glare for two hours every afternoon. Another may collect dust near a conveyor line. In warehouses, even small things like plastic wrapping or reflective tape can affect detection quality.

The issue becomes obvious after deployment.

The model starts missing objects it previously identified without problems. Confidence scores fluctuate. False detections increase during busy operational hours. In some cases, inference quality drops simply because the camera angle changed slightly during maintenance work.

Edge deployment creates another layer of difficulty. Compressing models for smaller devices sometimes introduces quantization artifacts (rounding errors from storing weights at lower numeric precision) that reduce prediction consistency, especially during fast-moving scenes.
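A small Python sketch shows where such rounding error comes from. It assumes symmetric 8-bit quantization with a single shared scale, which is one common scheme and not necessarily what a given edge toolchain uses. The weight values and the helper names `quantize_int8` and `dequantize` are invented for illustration.

```python
def quantize_int8(values):
    """Symmetric int8 quantization: map floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate float values."""
    return [q * scale for q in quantized]


# Invented weights: one large value forces a coarse scale onto all the small ones.
weights = [0.003, -0.012, 0.25, -0.031, 1.27]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

print(q)            # prints [0, -1, 25, -3, 127]
print(max(errors))  # largest rounding error, at most half of one quantization step
```

The smallest weight collapses to zero after quantization. Each such error is tiny, but many of them across a large model can shift borderline predictions, which is one reason compressed models are usually re-validated on footage from the target environment.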

This is why production systems usually need additional training using footage from the actual environment where they will operate. Teams often underestimate how much local conditions influence model behavior until the system goes live.

Spatial Awareness vs. Basic Recognition

Image recognition and object detection are often treated as the same thing, even though they solve different problems.

Recognition identifies what exists inside an image. Detection identifies both the object and its position within the frame. That location data is critical for automation systems.

A warehouse camera recognizing a forklift has limited value on its own. A system tracking the forklift’s movement, speed, and distance from workers becomes operationally useful.

Spatial awareness supports:

- Collision prevention systems

- Robotics navigation

- Safety monitoring

- Automated sorting systems

- Inventory movement tracking

Modern computer vision systems rely heavily on location-based analysis because automation decisions depend on spatial context, not just object labels.
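A minimal sketch of a location-based rule, using the forklift example above: given bounding boxes for one forklift and several workers, flag any worker whose box center is too close. The coordinates, the 120-pixel threshold, and the helper names are assumptions for illustration. Pixel distance is only a proxy, and a production system would need camera calibration to convert it into real-world distance.

```python
import math


def box_center(box):
    """Center point of an (x_min, y_min, x_max, y_max) box."""
    return ((box[0] + box[2]) / 2, (box[1] + box[3]) / 2)


def workers_too_close(forklift_box, worker_boxes, min_distance_px):
    """Indices of workers whose box center is within min_distance_px of the forklift's center."""
    fx, fy = box_center(forklift_box)
    close = []
    for i, box in enumerate(worker_boxes):
        wx, wy = box_center(box)
        if math.hypot(wx - fx, wy - fy) < min_distance_px:
            close.append(i)
    return close


# Invented detections for a single frame, in pixel coordinates.
forklift = (400, 300, 600, 500)      # center (500, 400)
workers = [
    (520, 380, 560, 480),            # center (540, 430), 50 px from the forklift
    (50, 50, 90, 150),               # center (70, 100), far away
]

print(workers_too_close(forklift, workers, min_distance_px=120))  # prints [0]
```

A label alone ("forklift", "person") could not trigger this alert. The decision depends entirely on where each object is in the frame.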

Why Computer Vision Projects Break Down After Deployment

A computer vision model can look highly accurate during internal testing and still struggle the moment it enters an actual facility. Conditions inside real operations rarely stay consistent for long. Afternoon glare from warehouse windows, scratched camera lenses, fast-moving inventory, or even a slightly shifted camera angle can change how the system interprets visual data.

That is usually where businesses run into trouble. The model was trained for one environment, but daily operations introduce variables that were never part of the original dataset. Over time, detection quality starts becoming inconsistent unless the system is continuously refined using footage collected from the live environment itself.
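One simple way to notice this kind of degradation early is to watch detection confidence over time. The sketch below is a hypothetical `ConfidenceMonitor` that keeps a rolling mean and flags when it falls well below a baseline measured during validation. The baseline, window size, tolerance, and score stream are all invented values, and confidence is only one possible signal among several.

```python
from collections import deque


class ConfidenceMonitor:
    """Track a rolling mean of detection confidence and flag drops below a baseline."""

    def __init__(self, baseline, window=5, tolerance=0.10):
        self.baseline = baseline        # mean confidence measured during validation
        self.tolerance = tolerance      # allowed drop before a review is flagged
        self.scores = deque(maxlen=window)

    def add(self, confidence):
        """Record one score; return True once a full window's mean falls too far below baseline."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False
        rolling_mean = sum(self.scores) / len(self.scores)
        return rolling_mean < self.baseline - self.tolerance


# Invented stream: confidence is stable at first, then drops (for example after a camera shift).
monitor = ConfidenceMonitor(baseline=0.90)
stream = [0.92, 0.91, 0.89, 0.90, 0.88, 0.70, 0.65, 0.60, 0.62, 0.58]
flags = [monitor.add(score) for score in stream]

print(flags.index(True) if True in flags else None)  # prints 7
```

Frames captured around the flagged period are natural candidates for review, labelling, and inclusion in the next round of training.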

This is where computer vision services and solutions become critical for maintaining consistent performance in changing environments.

The Hidden Importance of Training Data

The quality of a computer vision system usually depends on the type of visual data used during training. Models trained using perfectly lit images and clean object samples often struggle once they encounter real production environments. In warehouses or manufacturing units, objects may appear damaged, partially covered, poorly positioned, or moving faster than expected.

That difference matters more than many businesses realize. Teams getting reliable results typically build datasets from their own operational environments instead of depending entirely on publicly available samples. Training the system with real facility footage helps improve consistency because the model learns from the exact conditions it will face every day.

Computer Vision Is Quietly Reshaping Automation

Computer vision is no longer limited to experimental AI projects. Manufacturers now use it to spot damaged products before shipment. Retail staff use camera systems to notice empty shelves and misplaced products faster. Warehouses also depend on visual monitoring to keep track of inventory movement during heavy operational hours.

For many businesses, it has quietly become another operational tool rather than a future concept.

Develop Reliable Computer Vision Systems With Amenity Technologies

Most computer vision systems look impressive during testing. The real challenge starts after deployment, when lighting changes, movement increases, and environments become less controlled.

Amenity Technologies develops computer vision solutions built for actual operational conditions. From OCR systems to visual inspection and object tracking, the focus stays on practical performance, stability, and long-term usability inside real business environments.

FAQs

Q.1. Why do some computer vision models fail after deployment?

A: Many models are trained using clean datasets that do not reflect actual working conditions. Small environmental changes can reduce detection quality over time.

Q.2. Which industries are actively using computer vision today?

A: Manufacturing, logistics, healthcare, retail, and security are among the sectors already using it in daily operations. Amenity Technologies builds solutions tailored to industry-specific workflows and challenges.

Q.3. What is the difference between image recognition and object detection?

A: Recognition identifies what appears in an image, while detection also identifies where it appears. Our dedicated development team specializes in both types depending on operational needs.
