Front-desk teams aren’t failing; they’re reaching a saturation point. Mid-sized clinics often experience an 18–27% call abandonment rate during peak hours, and most of those abandoned calls aren’t spam or low-intent inquiries. They’re patients trying to book, reschedule, or clarify symptoms before things escalate.

What slows intake isn’t just volume. It’s fragmentation. One screen for scheduling, another for insurance eligibility, sticky notes for callbacks, and verbal symptom reporting that is rarely captured in structured EHR fields. The system leaks time at every step.

(Burnout rarely shows up as complaints; it shows up as shorter conversations and incomplete notes.)

Where Intake Actually Breaks:

- Calls peak between 9:00 and 11:30, but staffing doesn’t scale with them

- Insurance capture delays the entire call flow

- Dropped or incomplete interactions turn into repeat calls

Usually, the bottleneck isn’t the phone system. It’s everything behind it.

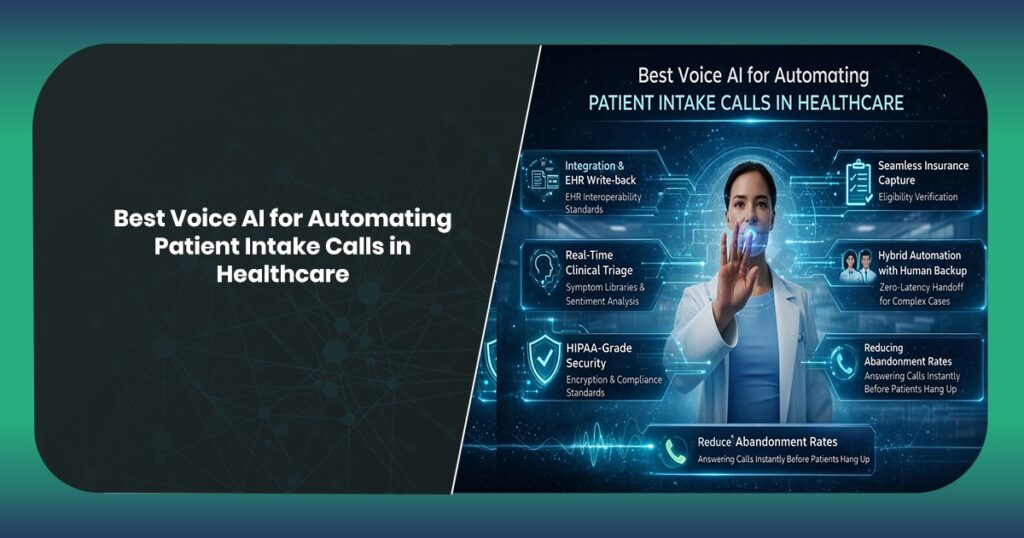

What Actually Qualifies as the Best Voice AI for Automating Patient Intake Calls

The clear difference shows up within the first week of deployment. Real patient calls don’t follow clean conversational paths. Callers overlap concerns, pause mid-sentence, switch topics, and often describe symptoms in ways that don’t align with clinical terminology. Systems that depend on rigid intent matching start to collapse under that variability.

That’s where most tools start to break.

The best voice AI for automating patient intake calls handles ambiguity without forcing the caller to adapt. NLU thresholds need to sustain accuracy even when inputs are incomplete or loosely structured; otherwise, conversations drift into repetition loops or premature escalation.

Interoperability is the second critical failure point.

If captured information doesn’t move directly into EHR workflows through HL7/FHIR standards, staff end up correcting or re-entering data. That’s not automation; it’s deferral of work.

What qualifies as “best” is not just about features. Consistency under real clinical conditions matters most, because no two patient conversations follow the same path.

Integration as a Non-Negotiable

A voice tool that lacks EHR write-back is merely noise. A voice bot that captures data but doesn’t push it directly into clinical systems creates more work, not less. Integration starts with the HL7 FHIR (Fast Healthcare Interoperability Resources) standard, but that’s just the baseline. What matters is how data flows after capture.

Demographics, symptoms, and appointment intent need to land in the correct fields, not in a generic note section someone has to clean up later. That cleanup step kills efficiency.

What Real Integration Looks Like:

- Structured field mapping into EHR/EMR (not flat notes)

- Real-time synchronization versus asynchronous batch processing

- Trigger-based workflows (eligibility checks, slot locking)

Disconnected tools create hidden work. Integration removes it before staff even notice.
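As a minimal sketch of structured field mapping, the snippet below turns captured intake fields into FHIR R4-style Patient and Appointment resources, expressed as plain dictionaries. The `IntakeCapture` class, its field names, and the function names are hypothetical, chosen only for illustration; a real deployment would follow the target EHR’s FHIR profile and submit through its API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class IntakeCapture:
    """Hypothetical container for fields collected during an intake call."""
    given_name: str
    family_name: str
    birth_date: date
    phone: str
    reported_symptom: str
    appointment_intent: str  # e.g. "book" or "reschedule"


def to_fhir_patient(capture: IntakeCapture) -> dict:
    """Map demographics into discrete Patient fields instead of a flat note."""
    return {
        "resourceType": "Patient",
        "name": [{"family": capture.family_name, "given": [capture.given_name]}],
        "birthDate": capture.birth_date.isoformat(),
        "telecom": [{"system": "phone", "value": capture.phone}],
    }


def to_fhir_appointment(capture: IntakeCapture, patient_ref: str) -> dict:
    """Map appointment intent and reported symptom into an Appointment request."""
    return {
        "resourceType": "Appointment",
        "status": "proposed",  # staff or scheduling logic confirms it later
        "description": f"Intake call: {capture.appointment_intent}",
        "reasonCode": [{"text": capture.reported_symptom}],
        "participant": [
            {"actor": {"reference": patient_ref}, "status": "needs-action"}
        ],
    }
```

Each captured value lands in a named field, so nobody has to pull it out of free text afterward.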

Clinical Triage and Sentiment Analysis

Not all calls are equal. Some shouldn’t wait.

Voice AI in healthcare needs to do more than schedule. It has to triage imperfectly, but safely. Patients don’t say “acute respiratory distress.” They say “I can’t catch my breath since last night.”

Semantic mapping is critical.

The best voice AI for healthcare front-desk automation maps everyday language into clinical categories using symptom libraries and probabilistic models. Layer sentiment signals such as tone, urgency, and hesitation on top, and you start catching signals that structured data alone misses.

Vocal markers of distress, such as pacing and repetition, matter as much as the words themselves.

Systems that miss urgency create risk. Systems that over-escalate create chaos. The balance is where real value sits.
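To make the semantic-mapping idea concrete, here is a deliberately simplified sketch. It uses plain phrase matching rather than the probabilistic models described above, and the phrase library, category names, and urgency set are hypothetical placeholders, not clinically validated content.

```python
from typing import Optional

# Hypothetical phrase library; illustrative only, not clinically validated.
SYMPTOM_LIBRARY = {
    "respiratory": ["can't catch my breath", "short of breath", "trouble breathing"],
    "cardiac": ["chest pain", "chest feels tight"],
    "general": ["fever", "sore throat"],
}

# Hypothetical set of categories that should never wait in a queue.
URGENT_CATEGORIES = {"respiratory", "cardiac"}


def normalize(utterance: str) -> str:
    """Lowercase and unify curly apostrophes so phrases match reliably."""
    return utterance.lower().replace("’", "'")


def map_to_category(utterance: str) -> Optional[str]:
    """Return the first clinical category whose phrases appear in the utterance."""
    text = normalize(utterance)
    for category, phrases in SYMPTOM_LIBRARY.items():
        if any(phrase in text for phrase in phrases):
            return category
    return None


def needs_clinical_review(utterance: str) -> bool:
    """Flag utterances that map to an urgent category."""
    return map_to_category(utterance) in URGENT_CATEGORIES
```

With this library, “I can’t catch my breath since last night” maps to the respiratory category and is flagged for review, while a request to reschedule maps to nothing and stays in the normal flow.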

The HIPAA Security Layer

Security failures don’t announce themselves. They surface later.

Voice interactions carry PHI from the first sentence. Names, dates of birth, symptoms, and sometimes insurance IDs are spoken out loud in waiting rooms or shared spaces. That data needs protection at multiple layers, not just during storage.

Encryption in transit is expected. Encryption at rest is standard. The gap usually sits in access control and auditability, the ability to track who accessed data, when, and for what purpose.

Off-the-shelf bots rarely go deep enough here.

Tokenization during processing reduces exposure far more effectively than post-processing masking.
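A rough sketch of what tokenization during processing can look like: PHI values are swapped for opaque tokens before the record moves through downstream steps, and only the vault can reverse the swap. The `TokenVault` class and `tokenize_fields` helper are hypothetical, and the in-memory dictionary stands in for what would need to be an encrypted, access-controlled store.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory vault; a real one would be encrypted and access-controlled."""

    def __init__(self) -> None:
        self._token_to_value = {}

    def tokenize(self, value: str) -> str:
        """Store the raw value and return a random, meaningless token."""
        token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        return token

    def detokenize(self, token: str) -> str:
        """Recover the raw value; only authorized code paths should call this."""
        return self._token_to_value[token]


def tokenize_fields(record: dict, phi_fields: set, vault: TokenVault) -> dict:
    """Replace PHI fields with tokens and leave non-sensitive fields untouched."""
    return {
        key: vault.tokenize(value) if key in phi_fields else value
        for key, value in record.items()
    }
```

Downstream components such as intent classification or routing then see only the tokens, which is the exposure reduction the paragraph above refers to.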

What Compliance Actually Requires:

- End-to-end encryption across voice and data layers

- Role-based access tied to clinical permissions

- Full audit logs for every interaction
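The last two requirements can be illustrated together. In this sketch every access attempt is checked against a role and written to an audit trail recording who, what, when, and why. The role names, permission sets, and the `access_field` function are hypothetical, and a production audit log would be append-only and tamper-evident rather than a Python list.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the data categories they may read.
ROLE_PERMISSIONS = {
    "front_desk": {"demographics", "appointment"},
    "nurse": {"demographics", "appointment", "symptoms"},
}

# Stand-in for an append-only audit store.
audit_log = []


def access_field(user: str, role: str, field: str, purpose: str) -> bool:
    """Check role-based permission and record the attempt, allowed or not."""
    allowed = field in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "user": user,
        "role": role,
        "field": field,
        "purpose": purpose,
        "allowed": allowed,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return allowed
```

Denied attempts are logged as well, since those are often the entries an audit most needs to see.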

One breach is enough. In healthcare, a single incident can do lasting damage to patient trust.

Reducing Abandonment Rates

Patients don’t wait through silence. They hang up.

Call abandonment isn’t random. It follows predictable patterns tied to wait time, call complexity, and perceived responsiveness. Even short delays create doubt: “Will someone actually pick up?”

Voice AI changes that immediately. Calls are answered instantly. Not routed, answered. The distinction matters: a routed caller is still waiting, while an answered caller is already in a conversation.

Immediate engagement reduces perceived latency to nearly zero, even if backend processing is happening asynchronously. The interaction feels active, not stalled.

(Engagement in the first 3 seconds determines whether a caller stays.)

What Moves the Needle:

- Sub-second response time

- Continuous dialogue (no dead air gaps)

- Smart follow-ups for dropped interactions

Faster response isn’t just convenient anymore. It’s retention.

The Hybrid Model: When to Hand Off to a Human

Automation breaks at the edges. That’s expected.

Some interactions don’t fit clean logic: layered symptoms, emotional distress, unclear intent. That’s where escalation logic becomes critical. It shouldn’t be reactive; it should be predefined and based on thresholds.

NLU confidence drops below a certain level. Sentiment spikes. Clinical keywords trigger flags.

Then the system hands off. The difference between good and bad handoffs is context: agents should never start from zero.

Zero-latency handoff means the human receives structured data, conversation history, and intent classification instantly.
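The three triggers and the context-rich handoff can be sketched as follows. The threshold values, flag terms, and the `CallState` structure are assumptions made for illustration; real thresholds would be tuned against call data and reviewed clinically.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical thresholds and keywords; tune per deployment.
MIN_NLU_CONFIDENCE = 0.70
MAX_DISTRESS_SCORE = 0.80
CLINICAL_FLAG_TERMS = {"chest pain", "can't breathe", "bleeding"}


@dataclass
class CallState:
    """Hypothetical snapshot of a call at the moment escalation is evaluated."""
    nlu_confidence: float
    distress_score: float
    transcript: list = field(default_factory=list)
    intent: str = "unknown"
    captured_fields: dict = field(default_factory=dict)


def escalation_reasons(state: CallState) -> list:
    """Return every predefined trigger that fired for this call."""
    reasons = []
    if state.nlu_confidence < MIN_NLU_CONFIDENCE:
        reasons.append("low_nlu_confidence")
    if state.distress_score > MAX_DISTRESS_SCORE:
        reasons.append("sentiment_spike")
    text = " ".join(state.transcript).lower()
    if any(term in text for term in CLINICAL_FLAG_TERMS):
        reasons.append("clinical_keyword")
    return reasons


def build_handoff(state: CallState) -> Optional[dict]:
    """Package context for the human agent, or return None if no trigger fired."""
    reasons = escalation_reasons(state)
    if not reasons:
        return None
    return {
        "reasons": reasons,
        "intent": state.intent,
        "captured_fields": state.captured_fields,
        "conversation_history": state.transcript,
    }
```

The payload carries the structured data, conversation history, and intent classification in one object, so the agent who picks up the call does not have to ask the patient to start over.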

Measuring ROI Beyond Efficiency

Time saved is visible. Accuracy isn’t, until it breaks something.

Most clinics calculate ROI based on reduced call handling time or staffing efficiency. That’s incomplete. The bigger impact shows up in fewer documentation errors, cleaner patient records, and reduced claim rejections tied to intake mistakes.

Removing repetitive intake tasks changes how front-desk teams operate. Less fatigue. More focus. Lower turnover. Hiring and training replacements cost more than most automation investments.

What Actually Improves:

- Data accuracy across patient records

- Staff retention over 6–12 months

- Patient satisfaction tied to the first interaction

Efficiency is the entry point. Stability is the long-term gain.

The Amenity Approach: Solving the Intake Crisis

Generic voice tools don’t survive clinical environments. They weren’t built for them.

Amenity Technologies approaches voice AI differently, starting from workflow, not technology. Intake isn’t treated as a call-handling problem. It’s a clinical data pipeline that happens to begin with a conversation.

That shift changes everything.

Systems are built around EHR interoperability, HL7/FHIR standards, and real intake scenarios, not ideal ones. Edge cases aren’t exceptions. They’re expected.

If your intake process still depends on hold queues and manual entry, the issue isn’t staffing; it’s architecture.

Schedule a technical workflow audit with Amenity Technologies. Map where your intake system is actually breaking, then fix it with a voice AI layer built for clinical operations, not generic automation.

FAQs

Q.1. Will patients actually feel comfortable speaking with an AI system?

A: Many patients care more about efficiency than about the nature of the interface, and most don’t mind as long as it works for them. If the system responds instantly, understands them without repetition, and gets them booked or routed correctly, adoption happens naturally. Resistance typically comes from poor implementations: slow responses, misinterpretations, or a robotic tone.

Q.2. What happens when the voice AI doesn’t understand the patient?

A: It shouldn’t guess when the patient’s intent is unclear. It should escalate. Well-designed systems operate on NLU confidence thresholds. When understanding drops below acceptable levels, the call is transferred to a human with full context, so the patient doesn’t have to start over or repeat anything.

Q.3. Can voice AI safely handle clinical triage without creating risk?

A: It can assist, but not replace clinical judgment. Voice AI identifies urgency patterns using symptom mapping and sentiment signals, but it operates within controlled escalation rules. High-risk cases are flagged and routed immediately to clinical staff.
