Most voice bots perform well in controlled environments. Real-world usage tells a different story.

Production traffic introduces variables that demos never capture, including network instability, inconsistent audio quality, and unpredictable user behavior. Even small disruptions on a SIP trunk, or the narrowband audio of telephony codecs like G.711, can distort speech before it reaches the system. The result is simple: misheard inputs, repeated responses, and user frustration.

Scale introduces a second layer of stress. Systems that work smoothly at low volumes begin to slow down when handling hundreds or thousands of simultaneous calls. Session handling becomes inefficient. Requests queue up. Conversations lose rhythm and clarity.

Hidden delays create the biggest impact. A slow backend response increases TTFB (Time to First Byte), causing noticeable pauses. Voice interactions depend on immediacy. Even a half-second delay feels unnatural. Many voice bot development services focus heavily on AI capabilities while overlooking infrastructure readiness. That imbalance is where most systems fail.

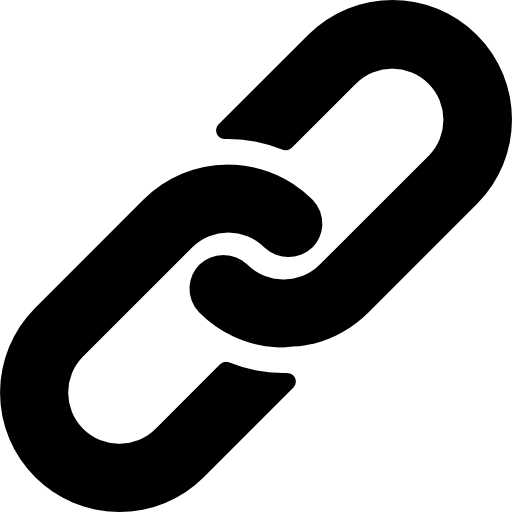

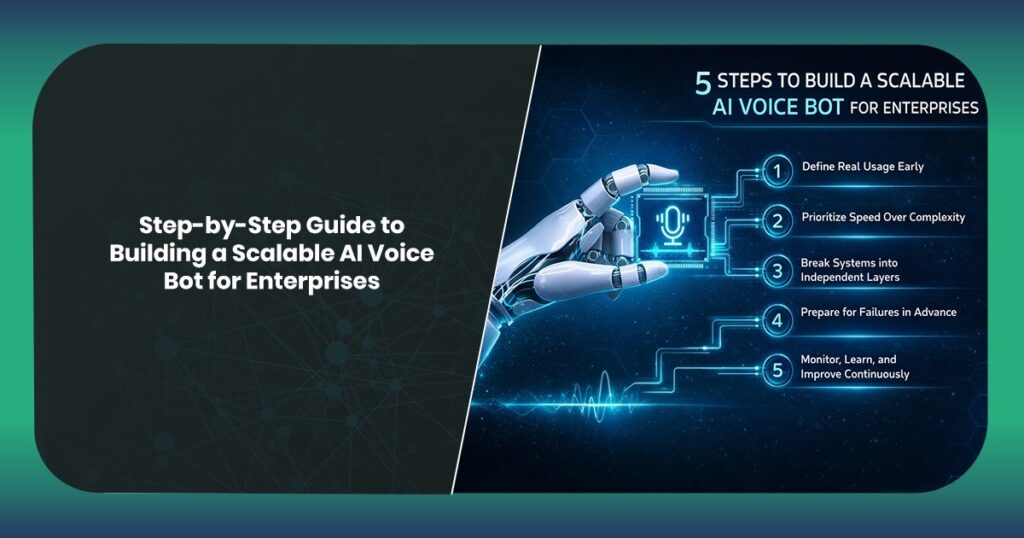

The Scalability Blueprint: 5 Steps to Architect Enterprise-Ready Voice Bots

If you plan to develop a scalable AI voice bot for your enterprise, consider this proven, step-by-step approach:

Define Real Usage Early

Plan for peak demand, not average traffic. Consider concurrency spikes, seasonal loads, and multi-region usage before building the system. Early assumptions shape long-term stability.
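
As a rough sizing sketch, Little's law (average concurrency equals arrival rate times average duration) turns call volume into the number of simultaneous sessions to plan for. The traffic figures and the 25% headroom factor below are hypothetical placeholders, not measurements.

```python
import math

def concurrent_calls(calls_per_hour: float, avg_call_seconds: float) -> float:
    """Little's law: average concurrency = arrival rate x average duration."""
    return calls_per_hour * avg_call_seconds / 3600.0

def required_capacity(peak_calls_per_hour: float, avg_call_seconds: float,
                      headroom: float = 1.25) -> int:
    """Sessions to provision for the peak hour, with an assumed safety margin."""
    return math.ceil(concurrent_calls(peak_calls_per_hour, avg_call_seconds) * headroom)

# Hypothetical traffic: 2,000 calls/hour on average, 9,000 at seasonal peak, 3-minute calls.
average = concurrent_calls(2_000, 180)   # 100.0 concurrent calls on an average hour
peak = required_capacity(9_000, 180)     # 563 sessions once headroom is applied
```

Sizing for the average hour here would leave the system several times short at peak, which is the gap this step is meant to close.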

Prioritize Speed Over Complexity

Faster responses create better user experiences than overly complex interactions. Keep conversations quick, relevant, and responsive. Users value clarity over sophistication.

Break Systems into Independent Layers

Separate speech input, processing, and response generation. This prevents delays in one component from affecting the entire interaction flow.
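
One way to picture this separation is a pipeline of independent workers connected by bounded queues. The sketch below is illustrative only: the stage names, the queue size, and the `asyncio.sleep` calls standing in for real recognition, understanding, and synthesis work are all assumptions.

```python
import asyncio

async def stage(name, inbox, outbox, work_seconds):
    """Generic pipeline stage: pull an item, do its work, pass it on."""
    while True:
        item = await inbox.get()
        if item is None:                     # sentinel: propagate shutdown downstream
            await outbox.put(None)
            return
        await asyncio.sleep(work_seconds)    # stands in for real ASR/NLU/TTS work
        await outbox.put(f"{item}>{name}")

async def feed(inbox, items):
    """Producer: push incoming utterances, then signal the end of input."""
    for item in items:
        await inbox.put(item)
    await inbox.put(None)

async def run_pipeline(utterances):
    # Bounded queues apply backpressure: when a stage falls behind,
    # upstream producers wait instead of piling up unbounded work.
    audio_q, text_q, intent_q, reply_q = (asyncio.Queue(maxsize=8) for _ in range(4))
    tasks = [
        asyncio.create_task(feed(audio_q, utterances)),
        asyncio.create_task(stage("asr", audio_q, text_q, 0.01)),
        asyncio.create_task(stage("nlu", text_q, intent_q, 0.01)),
        asyncio.create_task(stage("tts", intent_q, reply_q, 0.01)),
    ]
    replies = []
    while (item := await reply_q.get()) is not None:
        replies.append(item)
    await asyncio.gather(*tasks)
    return replies

replies = asyncio.run(run_pipeline(["call-1", "call-2", "call-3"]))
```

Because each stage runs on its own, recognition can already work on the next utterance while synthesis is still producing the previous reply.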

Prepare for Failures in Advance

External systems will fail. Networks will fluctuate. Use fallback responses, cached data, and human handoff mechanisms to maintain conversation continuity.
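
A minimal sketch of that fallback chain, assuming an async backend call and an in-memory cache: try the live system within a time limit, fall back to the last known answer, and otherwise say something that keeps the conversation going. The function names, the 0.8-second timeout, and the wording of the fallback line are hypothetical.

```python
import asyncio

FALLBACK = "I'm still checking on that. Is there anything else I can help with meanwhile?"
_cache = {}  # last known good answer per query (hypothetical in-memory cache)

async def answer_with_fallback(query, backend, timeout_s=0.8):
    """Return (source, text): live result, cached result, or a spoken fallback."""
    try:
        text = await asyncio.wait_for(backend(query), timeout=timeout_s)
        _cache[query] = text
        return "live", text
    except (asyncio.TimeoutError, ConnectionError):
        if query in _cache:
            return "cache", _cache[query]
        return "fallback", FALLBACK

async def fast_backend(query):
    return f"Balance for {query}: $42"

async def slow_backend(query):
    await asyncio.sleep(1.0)   # simulates a backend that misses the deadline
    return "too late"

async def demo():
    first = await answer_with_fallback("acct-1", fast_backend)
    second = await answer_with_fallback("acct-1", slow_backend, timeout_s=0.05)
    third = await answer_with_fallback("acct-2", slow_backend, timeout_s=0.05)
    return first, second, third

results = asyncio.run(demo())
```

A human handoff would be one more branch in the same function, for example after several consecutive fallbacks on the same call.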

Monitor, Learn, and Improve Continuously

Real-time insights reveal performance gaps. Track response times, user drop-offs, and interaction patterns to refine the system over time.
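
When tracking response times, percentiles tend to say more than averages, because a few slow turns are exactly what users remember. The sketch below uses the nearest-rank method on a made-up set of ten response times; the numbers are illustrative, not benchmarks.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical response times in milliseconds for ten conversational turns.
latencies_ms = [310, 290, 305, 1200, 330, 300, 295, 315, 980, 320]

p50 = percentile(latencies_ms, 50)              # 310: the typical turn looks fine
p95 = percentile(latencies_ms, 95)              # 1200: the turn users complain about
mean = sum(latencies_ms) / len(latencies_ms)    # 464.5: describes neither case well
```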

The Tech Stack That Actually Scales

Once the blueprint is defined, execution depends on the right foundation.

Voice bots rely on three essential components: speech recognition, understanding, and response generation. Each layer must perform consistently under real-world conditions, not ideal scenarios.

Speech recognition should adapt to imperfect inputs. Users speak with accents, background noise, and changing speeds. Systems must handle this variability without breaking the flow of interaction.

Understanding intent requires flexibility. Predefined scripts quickly become outdated. Techniques such as zero-shot intent recognition enable systems to handle new queries without constant retraining, which makes them more adaptable over time.

Response delivery must be quick. Systems should begin speaking as soon as possible rather than waiting to construct a complete answer. This reduces perceived delay and keeps conversations natural.
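
A simple way to start speaking early is to hand text to speech synthesis one sentence at a time as the response is generated. The sketch below assumes the response arrives as a stream of text tokens; the token list is hypothetical, and the sentence detection is deliberately naive (it would split on abbreviations or decimals that end a token).

```python
import re
from typing import Iterable, Iterator

_SENTENCE_END = re.compile(r"[.!?]\s*$")

def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each sentence as soon as it is complete instead of waiting for the full reply."""
    buffer = ""
    for token in tokens:
        buffer += token
        if _SENTENCE_END.search(buffer):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():          # flush any trailing text without end punctuation
        yield buffer.strip()

# Hypothetical token stream from a response generator.
stream = ["Your ", "order ", "shipped ", "today. ", "It ", "should ", "arrive ", "Friday."]
chunks = list(sentences_from_tokens(stream))
```

With this shape, the first sentence can be synthesized and played while the rest of the answer is still being produced.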

Architecture ties everything together. Decoupled systems ensure that delays in one layer do not cascade across the entire system. A strong voice bot development company focuses on this foundation rather than just adding features.

Latency is the Silent Killer in Voice AI

Delays undermine communication. A user asks a question. There’s a pause. Not long. Just enough to notice. That moment decides everything.

Voice is different from chat. In text-based conversations, users tolerate delay. In voice conversations, they expect continuity. Conversations are instinctive. Any break in rhythm creates doubt: did it understand me? Is it working? Should I repeat? That kind of hesitation compounds quickly.

Most latency issues don’t come from one place. They stack. A slight delay in speech recognition. Another in processing. Then a backend call that takes longer than expected. Suddenly, the response feels late, even if each component is only slightly slow.
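
The stacking effect is easy to see with a simple latency budget. The per-stage numbers below are hypothetical, chosen only to show how stages that each look acceptable can add up to a noticeable pause.

```python
# Hypothetical per-stage latencies in milliseconds for one conversational turn.
stages_ms = {
    "network_in": 60,
    "speech_recognition": 180,
    "intent_processing": 120,
    "backend_call": 250,
    "speech_synthesis_first_audio": 150,
    "network_out": 60,
}
BUDGET_MS = 500  # half a second, the point at which a pause already feels unnatural

total_ms = sum(stages_ms.values())                  # 820
over_budget_ms = total_ms - BUDGET_MS               # 320
slowest_stage = max(stages_ms, key=stages_ms.get)   # "backend_call"
```

No single stage exceeds 250 ms, yet the turn as a whole runs 320 ms over budget, which is why the end-to-end path matters more than any one component.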

Fixing this isn’t about chasing milliseconds in isolation. It’s about flow. Systems need to respond as if they’re thinking in real time, not assembling answers in the background. The difference is subtle. Users notice it immediately.

Choosing a Voice Bot Development Company That Goes Beyond Demo-Ware

Anyone can make a bot look good for five minutes. The real question is what happens after that.

Demos are controlled. Clean audio. Predictable inputs. No pressure. No scale. No failure scenarios. It’s a showcase, not a test.

Production is messy. Users interrupt. They rephrase. They speak halfway and change direction. Systems get slower. APIs fail. That’s where most solutions start to fall apart.

So the evaluation has to shift.

Don’t just ask what the bot can do. Ask what happens when things go wrong. Does it pause? Does it recover? Does it keep the conversation moving?

Look at how deeply it integrates. Surface-level connections work fine early on. Over time, they become bottlenecks.

Strong AI voice bot development services don’t just build features. They help build behavior under pressure. That’s what actually scales.

The Amenity Approach: Engineering for Real-World Conditions

No system runs perfectly all the time. Accepting that changes how you build.

At Amenity Technologies, AI voice bot development services are designed with that reality upfront. Not as an afterthought.

When something slows down, such as an API, a database, or a third-party service, the system doesn’t freeze. It adjusts. It shifts the conversation. It responds with what’s available. The user stays engaged.

That’s where graceful degradation becomes practical, not theoretical.

Traffic behaves unpredictably too. Expect sudden spikes and uneven distribution. Instead of stretching everything thin, the system prioritizes what matters most: active conversations. Everything else adapts around it.
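
As an illustration of that prioritization (a sketch under assumed names, not a description of any specific product), a priority queue can make sure live conversational turns are served before background work when capacity is tight. The job names and the two priority levels are hypothetical.

```python
import heapq
import itertools

LIVE_TURN, BACKGROUND = 0, 1      # lower number = served first
_counter = itertools.count()      # tie-breaker keeps FIFO order within a priority

def submit(queue, priority, job):
    heapq.heappush(queue, (priority, next(_counter), job))

def drain(queue, capacity):
    """Serve up to `capacity` jobs this tick; anything left over waits."""
    return [heapq.heappop(queue)[2] for _ in range(min(capacity, len(queue)))]

queue = []
submit(queue, BACKGROUND, "transcript-export")
submit(queue, LIVE_TURN, "call-17 reply")
submit(queue, BACKGROUND, "analytics-rollup")
submit(queue, LIVE_TURN, "call-42 reply")

served = drain(queue, capacity=3)                   # both live turns go first
deferred = [job for _, _, job in sorted(queue)]     # background work that waits
```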

There’s also a balance in how intelligence is used. Not every interaction needs full AI processing. Some paths are structured. Some are flexible. That mix reduces unnecessary complexity and keeps responses fast.
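
One hedged way to picture this mix is a router that checks a few cheap, structured patterns first and only falls through to full AI processing when none match. The patterns, intent names, and the `"ai"` placeholder below are assumptions for illustration.

```python
import re

# Hypothetical structured paths: cheap patterns that need no model call.
STRUCTURED_PATHS = [
    (re.compile(r"\b(opening|business) hours\b", re.I), "hours"),
    (re.compile(r"\b(agent|human|representative)\b", re.I), "handoff"),
    (re.compile(r"\border status\b", re.I), "order_status"),
]

def route(utterance: str) -> tuple[str, str]:
    """Return (path, intent). Fall through to the slower AI path only when no rule matches."""
    for pattern, intent in STRUCTURED_PATHS:
        if pattern.search(utterance):
            return "structured", intent
    return "ai", "open_ended"   # placeholder for a call to a language model

decisions = [route(u) for u in [
    "What are your opening hours?",
    "Can I talk to a human?",
    "My package arrived damaged and I was charged twice",
]]
```

Routine requests stay on the fast path, and only the open-ended complaint pays the cost of full processing.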

Performance improves over time. Real conversations reveal patterns where users hesitate, where responses slow down, and where intent gets missed. Those signals drive continuous refinement.

And because everything connects deeply with existing systems, responses stay relevant without adding extra layers that slow things down.

Executive Summary: What Actually Matters

- Most systems don’t fail early, they fail under load

- Small delays change how users feel about the system

- Speed isn’t a feature, it’s the experience

- Real users don’t follow predefined paths

- Systems must adapt without breaking flow

- Failures should be invisible to the user

- Demos rarely reflect real usage conditions

- Integration depth affects long-term performance

- Simpler architecture often scales better

- Voice bot development services should focus on continuity, not just capability

The 2026 Verdict: Scalability as a Competitive Moat

Voice-based business interaction is becoming routine. Expectations are catching up fast. Today, users don’t think about technology anymore. They notice how it behaves. Whether it responds instantly. Whether it feels smooth. Whether it works every time.

That’s where the gap is forming. Some systems will technically “work” but feel unreliable. Slight delays. Occasional confusion. Small breaks in flow. Individually minor. Collectively frustrating.

Others will feel effortless. Conversations move naturally. Responses come without hesitation. Even when something goes wrong, the interaction continues.

That difference won’t come from better features. It will come from better architecture.

Teams that recognize this early are already shifting their approach. Less focus on what the bot can say. More focus on how consistently it can respond.

Amenity Technologies works with organizations building for that standard, where performance holds up outside controlled environments.

Sometimes, a short technical conversation is enough to surface where things might break, and how to fix them before they do.

Scalability doesn’t stand out when it works. It stands out when everything else doesn’t.

Explore how Amenity Technologies can help you build voice systems that perform beyond controlled environments. Start with a focused technical discussion. Clarity often begins with the right questions.

FAQs

Q.1. Why do most AI voice bots fail when scaled?

A: Most systems are built for controlled environments, not real usage. When traffic increases, issues like delayed responses, poor audio handling, and weak system architecture start to surface. The failure isn’t in the AI; it’s in how the system is designed to handle load and unpredictability. At Amenity Technologies, we understand this concern and build solutions that cope with scaling requirements.

Q.2. How is a voice bot different from a chatbot in terms of scalability?

A: Voice bots operate in real time, which makes them far less forgiving. Chatbots can tolerate slight delays; voice bots must be designed to respond almost instantly. This requires stronger infrastructure, better handling of concurrent sessions, and tighter control over latency.

Q.3. What should one look for in a voice bot development company?

A: Focus on real-world performance, not just demos. Ask about scalability, latency handling, failure recovery, and integration depth. A strong voice bot development company will be able to explain how its system behaves under pressure, not just how it performs in ideal conditions.
