Systemic failures rarely originate with major collapses; they begin with minor errors. A chatbot gives slightly incorrect financial guidance, nothing dramatic; however, it is enough to mislead a user. The user acts on it. That’s when things escalate.

In finance, there’s no buffer for “almost correct.” You’re dealing with regulations, liability, and user trust at the same time. A poorly designed system doesn’t just affect experience, it creates exposure. We’ve seen institutions spend more time fixing chatbot mistakes than building actual value.

That’s the accountability gap. And closing it starts with choosing the right finance chatbot development company, not the cheapest or fastest one.

The Billion-Dollar Handshake: Why Choosing a Finance Chatbot Company Is a Security Decision

Most vendors present Finance AI Agent development as a CX upgrade. Better engagement. Faster responses. That’s surface-level thinking. In finance, every interaction carries risk. It could be data exposure, incorrect advice, or compliance violations. You’re not buying a digital assistant. You’re approving a system that handles sensitive financial intent.

We’ve seen projects framed as marketing tools that quietly evolved into decision layers. That’s where things break. Because the vendor wasn’t built for security-first thinking. The real evaluation isn’t UI quality, it’s how the system behaves under stress, ambiguity, and regulatory pressure. If your partner doesn’t treat this as a security decision from day one, you’re already exposed.

Beyond FAQs: What AI Chatbots Must Handle in Finance (2026)

Basic FAQ handling isn’t enough anymore. Users don’t come with structured questions; they come with mixed intent. “Why was I charged for this?” can signal a dispute, simple confusion, or fraud. The system needs to interpret context, not just keywords.

A sophisticated AI-based chatbot service for the financial industry must handle multi-intent conversations, retain conversation state across channels, and escalate correctly when risk thresholds are crossed. It should recognize when a query moves from informational to transactional.

Here’s where many systems fail. They are designed to respond confidently without verifying context. In finance, this is truly unacceptable. The system must know when not to answer, and that’s harder than answering itself.
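The routing behavior described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a production design: it assumes an upstream classifier (not shown) has already produced per-intent confidence scores, and the intent names, risk weights, and thresholds are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical risk weights per intent; a real deployment would define its own.
INTENT_RISK = {
    "fee_explanation": 0.1,  # informational
    "dispute": 0.6,          # transactional
    "fraud_report": 0.9,     # high risk
}


@dataclass
class Decision:
    action: str  # "answer", "clarify", or "escalate"
    reason: str


def route(intent_scores: dict[str, float],
          min_confidence: float = 0.7,
          risk_threshold: float = 0.5) -> Decision:
    """Decide whether to answer, ask a clarifying question, or hand off."""
    # Keep only intents the (assumed) classifier considers plausible.
    plausible = {i: s for i, s in intent_scores.items() if s >= 0.3}
    if not plausible:
        return Decision("clarify", "no plausible intent")

    # Escalate if ANY plausible intent is risky, even if it is not the top one.
    # Intents missing from the table are treated as maximum risk.
    worst = max(INTENT_RISK.get(i, 1.0) for i in plausible)
    if worst >= risk_threshold:
        return Decision("escalate", "risk threshold crossed")

    top_intent, top_score = max(plausible.items(), key=lambda kv: kv[1])
    if top_score < min_confidence or len(plausible) > 1:
        return Decision("clarify", "ambiguous or mixed intent")
    return Decision("answer", top_intent)


# A charge question that might be fraud is handed off, not answered.
print(route({"fee_explanation": 0.5, "fraud_report": 0.4}).action)
```

The design choice worth noting is that the risk check runs before the confidence check: a low-probability fraud signal still outranks a confident but harmless interpretation.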

The Hidden Risks in Financial Chatbots: Compliance and Hallucinations

Hallucination should not be viewed as a mere technical flaw. In finance, it’s a liability event waiting to happen. A chatbot that generates an incorrect response about fees, policies, or financial products can trigger regulatory consequences.

That’s why guardrails become more important than intelligence. Systems must enforce accurate response boundaries, pulling only from verified data sources. PII (Personally Identifiable Information) masking becomes critical here. Sensitive data must never be exposed, even during multi-step conversations.
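One way to picture a response boundary is a lookup that only ever returns pre-approved text and refuses otherwise. The sketch below is deliberately simplified and assumes a hypothetical in-memory store of compliance-approved answers keyed by topic; the topic names and wording are placeholders, not real policy content.

```python
# Hypothetical store of compliance-approved answers, keyed by topic.
VERIFIED_ANSWERS = {
    "wire_fee": "Wire fees are listed in the fee schedule of your account agreement.",
    "card_replacement": "You can request a replacement card from the Cards menu.",
}

FALLBACK = "I can't confirm that here. Let me connect you with a specialist."


def grounded_reply(topic: str) -> str:
    """Return approved text for a known topic, or a safe fallback.

    The function never composes new wording, so nothing unverified
    can reach the user through this path.
    """
    return VERIFIED_ANSWERS.get(topic, FALLBACK)


print(grounded_reply("wire_fee"))
print(grounded_reply("mortgage_rate_forecast"))  # not verified, so it falls back
```

A real system would likely retrieve from a governed knowledge base rather than a dictionary, but the boundary is the same: if the source is not verified, the answer is a handoff.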

Then there’s regulatory drift. Policies change. Guidelines evolve. If your system isn’t updated continuously, it becomes outdated without anyone noticing. That’s where compliance risks quietly build. And by the time they are discovered, it’s already too late.
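A simple way to make drift visible is to record when each content source was last reviewed and flag anything past a review window. The following is a minimal sketch; the source names and the 90-day window are assumptions chosen for illustration, not a regulatory requirement.

```python
from datetime import date, timedelta


def stale_sources(last_reviewed: dict[str, date],
                  today: date,
                  max_age_days: int = 90) -> list[str]:
    """Return the names of sources whose last review is older than the window."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in last_reviewed.items()
                  if reviewed < cutoff)


# Hypothetical review log for the content the chatbot answers from.
review_log = {
    "fee_schedule": date(2026, 5, 1),
    "kyc_policy": date(2025, 12, 1),
}
print(stale_sources(review_log, today=date(2026, 6, 1)))
```

Running a check like this on a schedule turns "outdated without anyone noticing" into an alert someone has to clear.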

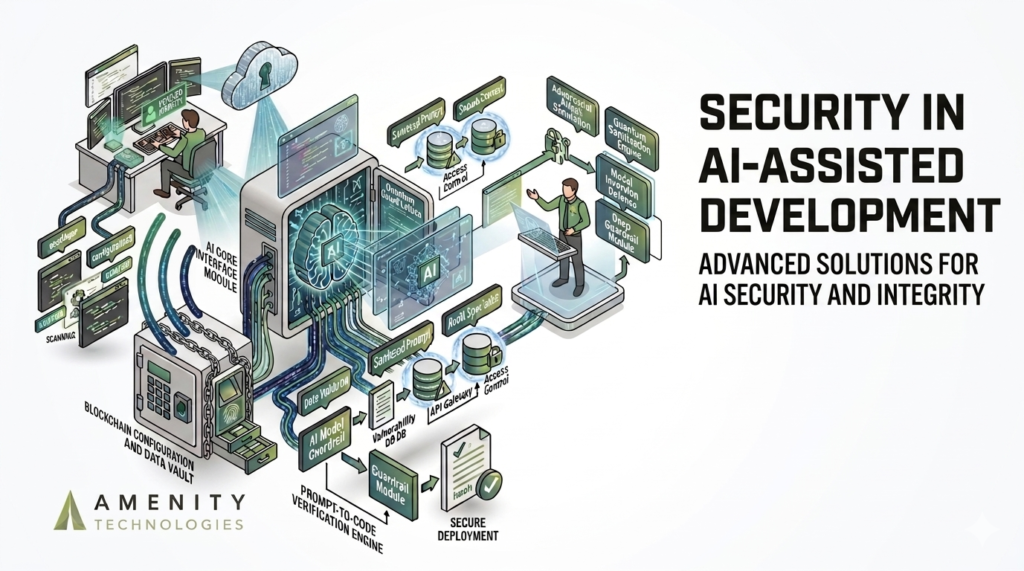

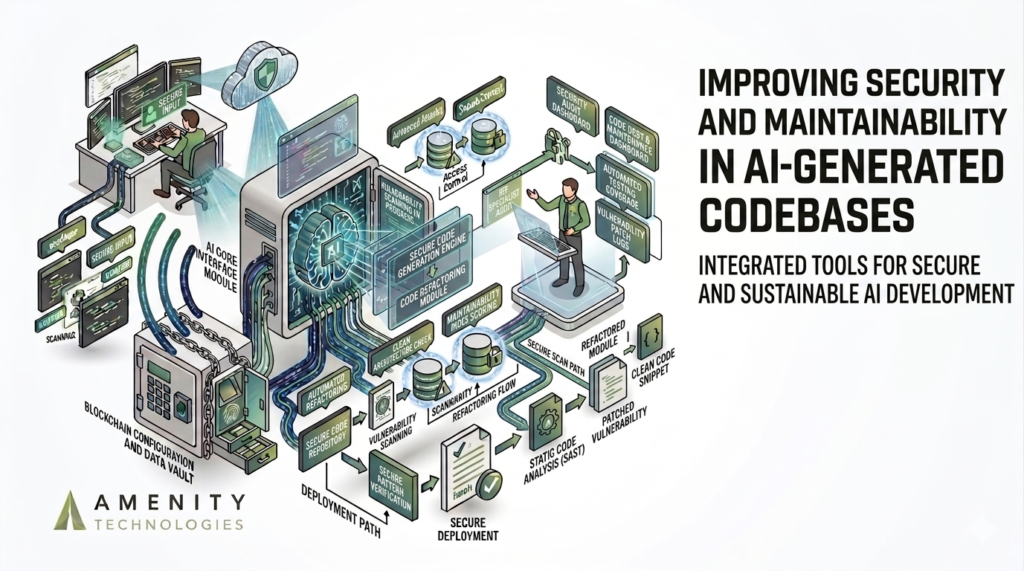

The Core Architecture: Why API-First Design Decides Everything

This is where most decisions should start.

If the system isn’t built API-first, it won’t scale properly. Finance environments are complex. There are core banking systems, CRMs, and fraud engines. The chatbot needs to connect to all of them without breaking workflows.

Latency overhead becomes a real issue here. If the system takes too long to fetch or validate data, users lose trust. In the worst-case scenario, delayed responses lead users to make incorrect assumptions during transactions.
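The latency concern can be handled with an explicit time budget per backend call. The sketch below uses Python’s standard `asyncio.wait_for`; the lookup functions and the budget values are hypothetical stand-ins for real core-banking calls.

```python
import asyncio
from typing import Awaitable, Callable, Optional


async def fetch_with_budget(fetch: Callable[[], Awaitable[str]],
                            budget_s: float) -> Optional[str]:
    """Run a backend call, returning None if it exceeds the time budget."""
    try:
        return await asyncio.wait_for(fetch(), timeout=budget_s)
    except asyncio.TimeoutError:
        return None


async def slow_balance_lookup() -> str:
    # Stand-in for a slow core-banking call (hypothetical).
    await asyncio.sleep(0.2)
    return "balance retrieved"


async def reply(fetch: Callable[[], Awaitable[str]], budget_s: float) -> str:
    result = await fetch_with_budget(fetch, budget_s)
    if result is None:
        # Say so explicitly instead of guessing or showing stale data.
        return "I couldn't retrieve that in time. Please try again shortly."
    return result


print(asyncio.run(reply(slow_balance_lookup, budget_s=0.05)))
```

The point of the fallback branch is that a timeout produces an honest "not available" message rather than a partial or assumed answer.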

A strong finance virtual support bot development company doesn’t just build conversation layers. It builds infrastructure that can handle real-time data exchange without compromising speed or accuracy.

Legacy System Integration: The Hidden Constraint No One Talks About

Modern systems are easy to integrate. Legacy systems are not.

Most financial institutions still rely on older infrastructure. COBOL-based systems, fragmented databases, limited API exposure. This is where many chatbot projects stall, not because of design, but because integration becomes complex.

We’ve seen vendors underestimate this repeatedly. They build a clean front-end experience but struggle to connect it to actual systems. That gap is what causes operational friction.

The real challenge isn’t building the chatbot. It’s making it work with what you already have. And that requires experience beyond standard AI deployment.

The Implementation Roadmap: From Discovery to Pentesting

A proper rollout doesn’t start with the development process. It starts with discovery.

First comes the security audit, which involves understanding data flow, access points, and risk areas. Then system mapping, including what connects where, and how. Only after that does development begin.

UAT (User Acceptance Testing) is where most issues surface. Real users behave differently than expected. That’s where edge cases appear.

Then comes pentesting. Not optional. Required.

This phase tests how the system acts under stress, attack scenarios, and unexpected inputs. If your vendor skips or rushes this step, that’s a red flag.

Data Handling Discipline: Why PII Masking Isn’t Optional

Financial conversations usually involve sensitive data such as account numbers, transaction details, and identity information. If that data is exposed, even briefly, the impact is immediate.

PII masking needs to be enforced at every level. Not just storage, but during processing and response generation. The system should never “accidentally” surface sensitive data.

We’ve seen systems fail here during edge cases, multi-step conversations where context carries over incorrectly. That’s where leaks happen.
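As a rough illustration of masking during processing and response generation, the sketch below redacts two kinds of sensitive values with regular expressions. The patterns are assumptions for demonstration only (any 8 to 16 digit run is treated as an account number); real deployments typically need broader, validated detectors.

```python
import re

# Illustrative patterns only; not an exhaustive PII detector.
ACCOUNT_RE = re.compile(r"\b\d{8,16}\b")
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")


def mask_pii(text: str) -> str:
    """Mask account-like numbers (keeping the last 4 digits) and emails."""
    def mask_account(match: re.Match) -> str:
        digits = match.group(0)
        return "*" * (len(digits) - 4) + digits[-4:]

    text = ACCOUNT_RE.sub(mask_account, text)
    return EMAIL_RE.sub("[email redacted]", text)


print(mask_pii("Account 1234567890 for jane@example.com"))
# prints: Account ******7890 for [email redacted]
```

To cover the multi-step case, the same function would be applied both to each user message before it is stored in conversation context and to each response before it is sent, so carried-over context never holds the raw values.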

A sophisticated AI-based virtual assistant service for the financial industry treats data handling as a core function, not an add-on.

Measuring What Matters: Ticket Deflection vs Customer Lifetime Value

Most teams measure success using ticket deflection. Fewer tickets = success. But that’s incomplete.

What’s important is what happens after deflection. Does the user resolve their issue? Do they stay? Do they engage further?

Customer Lifetime Value (CLV) tells a better story. If your AI assistant reduces tickets but increases churn, you’ve solved the wrong problem. The primary goal isn’t just efficiency; it’s retention and growth.
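To see why deflection alone can mislead, it helps to put the two metrics side by side. The sketch below uses a common simplified CLV model (monthly margin divided by monthly churn rate); that formula and all the numbers are assumptions for illustration, not figures from any real deployment.

```python
def deflection_rate(deflected: int, total: int) -> float:
    """Share of conversations resolved without creating a ticket."""
    return deflected / total if total else 0.0


def simple_clv(monthly_margin: float, monthly_churn: float) -> float:
    """Simplified CLV: margin per month times expected lifetime (1 / churn)."""
    if not 0 < monthly_churn <= 1:
        raise ValueError("monthly_churn must be in (0, 1]")
    return monthly_margin / monthly_churn


# Hypothetical before/after comparison: deflection improves, churn worsens.
before = {"deflection": deflection_rate(300, 1000), "clv": simple_clv(20.0, 0.020)}
after = {"deflection": deflection_rate(500, 1000), "clv": simple_clv(20.0, 0.025)}

print(f"deflection: {before['deflection']:.0%} -> {after['deflection']:.0%}")
print(f"CLV:        {before['clv']:.0f} -> {after['clv']:.0f}")
```

In this made-up scenario the deflection dashboard looks like a win while the value of each customer falls, which is exactly the case the ticket count hides.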

Where Most Vendors Fall Short (And Why It Matters)

Many vendors highlight features because that’s what they promote the most. Better UI. Faster responses. More integrations.

But they miss operational reality. They don’t account for edge cases, regulatory updates, or system constraints. The outcome? A system that works well in demos but struggles in production.

We’ve seen this repeatedly.

The difference isn’t capability, it’s discipline. Building for finance requires a different level of precision. And most general AI vendors aren’t built for that.

Why Amenity Technologies Focuses on Surgical Precision

This is where positioning becomes critical.

At Amenity Technologies, our approach is targeted, not broad. All systems are engineered with security, compliance, and integration as core principles, not afterthoughts.

Our development team strictly focuses on:

- Controlled deployment cycles

- Strict data handling protocols

- Integration with existing infrastructure

The goal isn’t to build the biggest system. It’s to build a reliable one. That’s exactly what closes the accountability gap.

The ROI Reality: Risk Reduction Is the First Return

ROI in finance doesn’t start with revenue. It starts with risk reduction.

Avoiding compliance issues. Preventing incorrect responses. Reducing operational friction. These don’t always show up immediately in the numbers, but they have a significant impact over time.

Then comes efficiency. Then engagement. Then growth.

If you measure ROI only in short-term gains, you miss the bigger picture. The real value of chatbots in financial services shows up over time.

The Final Verdict: Your 30-Day Partner Evaluation

You don’t need six months to evaluate a vendor. Thirty days is enough if you ask the right questions.

Begin by testing their integration approach. Challenge their data handling. Push their system with edge cases and see how it responds.

Schedule a Security-First Demo with Amenity Technologies. Because in finance, you’re not just selecting a vendor. You’re choosing accountability.

FAQs

Q.1. How do we ensure compliance across changing regulations?

A: Through continuous monitoring and updates. Systems need to be designed for adaptability, not static rule sets, to handle regulatory drift efficiently.

Q.2. What happens if the chatbot gives incorrect financial guidance?

A: If it happens, it is a liability concern, not just a technical one. Systems must include strict validation layers and fallback mechanisms to prevent unverified responses from reaching users.

Q.3. How do we measure real ROI beyond cost savings?

A: Look at retention, error reduction, and customer trust metrics. These indicators often reveal more value than simple ticket deflection numbers.
